vLLM

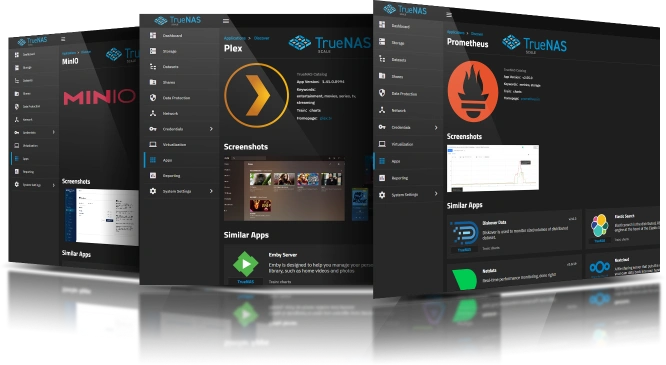


Keywords: ai, llm, inference, openai, gpu

Train: Community

Home Page: https://github.com/vllm-project/vllm

Added: 2026-04-30

Last Updated: 2026-05-12

vLLM is a high-throughput and memory-efficient inference and serving engine for LLMs with an OpenAI-compatible API.
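Because the server speaks the OpenAI-compatible HTTP API, any plain HTTP client can reach it once the app is running. The following is a minimal sketch, not an official client: it assumes the web port is published on the catalog default of 30422, that no API key has been configured, and that the host name `truenas.local` and the helper names `build_chat_request` and `ask` are hypothetical placeholders. The model name must match whatever was entered in the Model Name field.

```python
import json
import urllib.request


def build_chat_request(host, model, prompt, port=30422):
    """Build a POST request for the /v1/chat/completions route.

    The default port mirrors the app's default web port; adjust it if
    the port number was changed during installation.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"http://{host}:{port}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(host, model, prompt, port=30422, timeout=60):
    """Send the request and return the text of the first reply."""
    request = build_chat_request(host, model, prompt, port)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

A call such as `ask("truenas.local", "Qwen/Qwen3-0.6B", "Hello")` would return the model's reply as a string, provided the model has finished loading and the port bind mode is set to published.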

Run as Context: Container [vllm] can run as any non-root user and group.

Group: 568 / Host group is [apps]

User: 568 / Host user is [apps]

App Metadata (Raw File)

{

"1.0.3": {

"healthy": true,

"supported": true,

"healthy_error": null,

"location": "/__w/apps/apps/trains/community/vllm/1.0.3",

"last_update": "2026-05-12 11:48:53",

"required_features": [],

"human_version": "v0.20.2_1.0.3",

"version": "1.0.3",

"app_metadata": {

"app_version": "v0.20.2",

"capabilities": [],

"categories": [

"ai"

],

"changelog_url": "https://github.com/vllm-project/vllm/releases",

"date_added": "2026-04-30",

"description": "vLLM is a high-throughput and memory-efficient inference and serving engine for LLMs with an OpenAI-compatible API.",

"home": "https://github.com/vllm-project/vllm",

"host_mounts": [],

"icon": "https://media.sys.truenas.net/apps/vllm/icons/icon.svg",

"keywords": [

"ai",

"llm",

"inference",

"openai",

"gpu"

],

"lib_version": "2.3.5",

"lib_version_hash": "c0d042e3de6350fae9aee546d3c79bc0accf1f2011da89cc1ebff29421aa7fc9",

"maintainers": [

{

"email": "dev@truenas.com",

"name": "truenas",

"url": "https://www.truenas.com/"

}

],

"name": "vllm",

"run_as_context": [

{

"description": "Container [vllm] can run as any non-root user and group.",

"gid": 568,

"group_name": "Host group is [apps]",

"uid": 568,

"user_name": "Host user is [apps]"

}

],

"screenshots": [],

"sources": [

"https://apps.truenas.com/catalog/vllm_community/",

"https://github.com/vllm-project/vllm"

],

"title": "vLLM",

"train": "community",

"version": "1.0.3"

},

"schema": {

"groups": [

{

"name": "vLLM Configuration",

"description": "Configure vLLM"

},

{

"name": "User and Group Configuration",

"description": "Configure User and Group for vLLM"

},

{

"name": "Network Configuration",

"description": "Configure Network for vLLM"

},

{

"name": "Storage Configuration",

"description": "Configure Storage for vLLM"

},

{

"name": "Labels Configuration",

"description": "Configure Labels for vLLM"

},

{

"name": "Resources Configuration",

"description": "Configure Resources for vLLM"

}

],

"questions": [

{

"variable": "vllm",

"label": "",

"group": "vLLM Configuration",

"schema": {

"type": "dict",

"attrs": [

{

"variable": "image_selector",

"label": "Image Selector",

"description": "Image to use",

"schema": {

"type": "string",

"default": "image",

"required": true,

"enum": [

{

"value": "image",

"description": "NVIDIA CUDA Image"

},

{

"value": "rocm_image",

"description": "AMD ROCm Image"

},

{

"value": "cpu_image",

"description": "CPU Image"

}

]

}

},

{

"variable": "model_name",

"label": "Model Name",

"description": "The HuggingFace model ID to serve (e.g. Qwen/Qwen3-0.6B).",

"schema": {

"type": "string",

"required": true,

"default": ""

}

},

{

"variable": "max_model_len",

"label": "Max Model Length",

"description": "Maximum sequence length for the model. Lower values reduce memory usage.",

"schema": {

"type": "int",

"required": false,

"null": true,

"default": null

}

},

{

"variable": "hf_token",

"label": "HuggingFace Token",

"description": "The HuggingFace token for accessing gated models.",

"schema": {

"type": "string",

"default": "",

"private": true

}

},

{

"variable": "additional_envs",

"label": "Additional Environment Variables",

"schema": {

"type": "list",

"default": [],

"items": [

{

"variable": "env",

"label": "Environment Variable",

"schema": {

"type": "dict",

"attrs": [

{

"variable": "name",

"label": "Name",

"schema": {

"type": "string",

"required": true

}

},

{

"variable": "value",

"label": "Value",

"schema": {

"type": "string"

}

}

]

}

}

]

}

}

]

}

},

{

"variable": "run_as",

"label": "",

"group": "User and Group Configuration",

"schema": {

"type": "dict",

"attrs": [

{

"variable": "user",

"label": "User ID",

"description": "The user id that vLLM files will be owned by.",

"schema": {

"type": "int",

"min": 568,

"default": 568,

"required": true

}

},

{

"variable": "group",

"label": "Group ID",

"description": "The group id that vLLM files will be owned by.",

"schema": {

"type": "int",

"min": 568,

"default": 568,

"required": true

}

}

]

}

},

{

"variable": "network",

"label": "",

"group": "Network Configuration",

"schema": {

"type": "dict",

"attrs": [

{

"variable": "web_port",

"label": "Web Port",

"schema": {

"type": "dict",

"attrs": [

{

"variable": "bind_mode",

"label": "Port Bind Mode",

"description": "Publish port on the host for external access.\nExpose port for inter-container communication.\nNone - No external access.\n",

"schema": {

"type": "string",

"default": "published",

"enum": [

{

"value": "published",

"description": "Publish port on the host for external access"

},

{

"value": "exposed",

"description": "Expose port for inter-container communication"

},

{

"value": "",

"description": "None"

}

]

}

},

{

"variable": "port_number",

"label": "Port Number",

"schema": {

"type": "int",

"default": 30422,

"min": 1,

"max": 65535,

"required": true

}

},

{

"variable": "host_ips",

"label": "Host IPs",

"description": "IPs on the host to bind this port",

"schema": {

"type": "list",

"show_if": [

[

"bind_mode",

"=",

"published"

]

],

"default": [],

"items": [

{

"variable": "host_ip",

"label": "Host IP",

"schema": {

"type": "string",

"required": true,

"$ref": [

"definitions/node_bind_ip"

]

}

}

]

}

}

]

}

},

{

"variable": "networks",

"label": "Networks",

"description": "The docker networks to join",

"schema": {

"type": "list",

"show_if": [

[

"host_network",

"=",

false

]

],

"default": [],

"items": [

{

"variable": "network",

"label": "Network",

"schema": {

"type": "dict",

"attrs": [

{

"variable": "name",

"label": "Name",

"description": "The network name. It will be created if it does not exist.\nOr you can reference an existing network.\n",

"schema": {

"type": "string",

"default": "",

"required": true

}

},

{

"variable": "containers",

"label": "Containers",

"description": "The containers to add to this network.",

"schema": {

"type": "list",

"items": [

{

"variable": "container",

"label": "Container",

"schema": {

"type": "dict",

"attrs": [

{

"variable": "name",

"label": "Container Name",

"schema": {

"type": "string",

"required": true,

"enum": [

{

"value": "vllm",

"description": "vllm"

}

]

}

},

{

"variable": "config",

"label": "Container Network Configuration",

"schema": {

"type": "dict",

"attrs": [

{

"variable": "aliases",

"label": "Aliases (Optional)",

"description": "The network aliases to use for this container on this network.",

"schema": {

"type": "list",

"default": [],

"items": [

{

"variable": "alias",

"label": "Alias",

"schema": {

"type": "string"

}

}

]

}

},

{

"variable": "interface_name",

"label": "Interface Name (Optional)",

"description": "The network interface name to use for this network",

"schema": {

"type": "string"

}

},

{

"variable": "mac_address",

"label": "MAC Address (Optional)",

"description": "The MAC address to use for this network interface.",

"schema": {

"type": "string"

}

},

{

"variable": "ipv4_address",

"label": "IPv4 Address (Optional)",

"description": "The IPv4 address to use for this network interface.",

"schema": {

"type": "string"

}

},

{

"variable": "ipv6_address",

"label": "IPv6 Address (Optional)",

"description": "The IPv6 address to use for this network interface.",

"schema": {

"type": "string"

}

},

{

"variable": "gw_priority",

"label": "Gateway Priority (Optional)",

"description": "Indicates the priority of the gateway for this network interface.",

"schema": {

"type": "int",

"null": true

}

},

{

"variable": "priority",

"label": "Priority (Optional)",

"description": "Indicates in which order Compose connects the service's containers to its networks.",

"schema": {

"type": "int",

"null": true

}

}

]

}

}

]

}

}

]

}

}

]

}

}

]

}

},

{

"variable": "host_network",

"label": "Host Network",

"description": "Enable host network to allow vLLM to communicate with other services\non the same host without port mapping.\n",

"schema": {

"type": "boolean",

"default": false

}

}

]

}

},

{

"variable": "storage",

"label": "",

"group": "Storage Configuration",

"schema": {

"type": "dict",

"attrs": [

{

"variable": "data",

"label": "Data Storage",

"schema": {

"type": "dict",

"attrs": [

{

"variable": "type",

"label": "Type",

"description": "Host Path (Path that already exists on the system).\nixVolume (Dataset created automatically by the system).\n",

"schema": {

"type": "string",

"required": true,

"default": "ix_volume",

"enum": [

{

"value": "host_path",

"description": "Host Path (Path that already exists on the system)"

},

{

"value": "ix_volume",

"description": "ixVolume (Dataset created automatically by the system)"

}

]

}

},

{

"variable": "ix_volume_config",

"label": "ixVolume Configuration",

"description": "The configuration for the ixVolume dataset.",

"schema": {

"type": "dict",

"show_if": [

[

"type",

"=",

"ix_volume"

]

],

"$ref": [

"normalize/ix_volume"

],

"attrs": [

{

"variable": "acl_enable",

"label": "Enable ACL",

"description": "Enable ACL for the storage.",

"schema": {

"type": "boolean",

"default": false

}

},

{

"variable": "dataset_name",

"label": "Dataset Name",

"description": "The name of the dataset to use for storage.",

"schema": {

"type": "string",

"required": true,

"hidden": true,

"default": "data"

}

},

{

"variable": "acl_entries",

"label": "ACL Configuration",

"schema": {

"type": "dict",

"show_if": [

[

"acl_enable",

"=",

true

]

],

"attrs": []

}

}

]

}

},

{

"variable": "host_path_config",

"label": "Host Path Configuration",

"schema": {

"type": "dict",

"show_if": [

[

"type",

"=",

"host_path"

]

],

"attrs": [

{

"variable": "acl_enable",

"label": "Enable ACL",

"description": "Enable ACL for the storage.",

"schema": {

"type": "boolean",

"default": false

}

},

{

"variable": "acl",

"label": "ACL Configuration",

"schema": {

"type": "dict",

"show_if": [

[

"acl_enable",

"=",

true

]

],

"attrs": [],

"$ref": [

"normalize/acl"

]

}

},

{

"variable": "path",

"label": "Host Path",

"description": "The host path to use for storage.",

"schema": {

"type": "hostpath",

"show_if": [

[

"acl_enable",

"=",

false

]

],

"required": true

}

}

]

}

}

]

}

},

{

"variable": "additional_storage",

"label": "Additional Storage",

"schema": {

"type": "list",

"default": [],

"items": [

{

"variable": "storage_entry",

"label": "Storage Entry",

"schema": {

"type": "dict",

"attrs": [

{

"variable": "type",

"label": "Type",

"description": "Host Path (Path that already exists on the system).\nixVolume (Dataset created automatically by the system).\nSMB/CIFS Share (Mounts a volume to a SMB share).\nNFS Share (Mounts a volume to a NFS share).\n",

"schema": {

"type": "string",

"required": true,

"default": "ix_volume",

"enum": [

{

"value": "host_path",

"description": "Host Path (Path that already exists on the system)"

},

{

"value": "ix_volume",

"description": "ixVolume (Dataset created automatically by the system)"

},

{

"value": "cifs",

"description": "SMB/CIFS Share (Mounts a volume to a SMB share)"

},

{

"value": "nfs",

"description": "NFS Share (Mounts a volume to a NFS share)"

}

]

}

},

{

"variable": "read_only",

"label": "Read Only",

"description": "Mount the volume as read only.",

"schema": {

"type": "boolean",

"default": false

}

},

{

"variable": "mount_path",

"label": "Mount Path",

"description": "The path inside the container to mount the storage.",

"schema": {

"type": "path",

"required": true

}

},

{

"variable": "host_path_config",

"label": "Host Path Configuration",

"schema": {

"type": "dict",

"show_if": [

[

"type",

"=",

"host_path"

]

],

"attrs": [

{

"variable": "acl_enable",

"label": "Enable ACL",

"description": "Enable ACL for the storage.",

"schema": {

"type": "boolean",

"default": false

}

},

{

"variable": "acl",

"label": "ACL Configuration",

"schema": {

"type": "dict",

"show_if": [

[

"acl_enable",

"=",

true

]

],

"attrs": [],

"$ref": [

"normalize/acl"

]

}

},

{

"variable": "path",

"label": "Host Path",

"description": "The host path to use for storage.",

"schema": {

"type": "hostpath",

"show_if": [

[

"acl_enable",

"=",

false

]

],

"required": true

}

}

]

}

},

{

"variable": "ix_volume_config",

"label": "ixVolume Configuration",

"description": "The configuration for the ixVolume dataset.",

"schema": {

"type": "dict",

"show_if": [

[

"type",

"=",

"ix_volume"

]

],

"$ref": [

"normalize/ix_volume"

],

"attrs": [

{

"variable": "acl_enable",

"label": "Enable ACL",

"description": "Enable ACL for the storage.",

"schema": {

"type": "boolean",

"default": false

}

},

{

"variable": "dataset_name",

"label": "Dataset Name",

"description": "The name of the dataset to use for storage.",

"schema": {

"type": "string",

"required": true,

"default": "storage_entry"

}

},

{

"variable": "acl_entries",

"label": "ACL Configuration",

"schema": {

"type": "dict",

"show_if": [

[

"acl_enable",

"=",

true

]

],

"attrs": [],

"$ref": [

"normalize/acl"

]

}

}

]

}

},

{

"variable": "cifs_config",

"label": "SMB Configuration",

"description": "The configuration for the SMB dataset.",

"schema": {

"type": "dict",

"show_if": [

[

"type",

"=",

"cifs"

]

],

"attrs": [

{

"variable": "server",

"label": "Server",

"description": "The server to mount the SMB share.",

"schema": {

"type": "string",

"required": true

}

},

{

"variable": "path",

"label": "Path",

"description": "The path to mount the SMB share.",

"schema": {

"type": "string",

"required": true

}

},

{

"variable": "username",

"label": "Username",

"description": "The username to use for the SMB share.",

"schema": {

"type": "string",

"required": true

}

},

{

"variable": "password",

"label": "Password",

"description": "The password to use for the SMB share.",

"schema": {

"type": "string",

"required": true,

"private": true

}

},

{

"variable": "domain",

"label": "Domain",

"description": "The domain to use for the SMB share.",

"schema": {

"type": "string"

}

}

]

}

},

{

"variable": "nfs_config",

"label": "NFS Configuration",

"description": "The configuration for the NFS dataset.",

"schema": {

"type": "dict",

"show_if": [

[

"type",

"=",

"nfs"

]

],

"attrs": [

{

"variable": "server",

"label": "Server",

"description": "The server to mount the NFS share.",

"schema": {

"type": "string",

"required": true

}

},

{

"variable": "path",

"label": "Path",

"description": "The path to mount the NFS share.",

"schema": {

"type": "string",

"required": true

}

}

]

}

}

]

}

}

]

}

}

]

}

},

{

"variable": "labels",

"label": "",

"group": "Labels Configuration",

"schema": {

"type": "list",

"default": [],

"items": [

{

"variable": "label",

"label": "Label",

"schema": {

"type": "dict",

"attrs": [

{

"variable": "key",

"label": "Key",

"schema": {

"type": "string",

"required": true

}

},

{

"variable": "value",

"label": "Value",

"schema": {

"type": "string",

"required": true

}

},

{

"variable": "containers",

"label": "Containers",

"description": "Containers where the label should be applied",

"schema": {

"type": "list",

"items": [

{

"variable": "container",

"label": "Container",

"schema": {

"type": "string",

"required": true,

"enum": [

{

"value": "vllm",

"description": "vllm"

}

]

}

}

]

}

}

]

}

}

]

}

},

{

"variable": "resources",

"label": "",

"group": "Resources Configuration",

"schema": {

"type": "dict",

"attrs": [

{

"variable": "limits",

"label": "Limits",

"schema": {

"type": "dict",

"attrs": [

{

"variable": "cpus",

"label": "CPUs",

"description": "CPUs limit for vLLM.",

"schema": {

"type": "int",

"default": 2,

"required": true

}

},

{

"variable": "memory",

"label": "Memory (in MB)",

"description": "Memory limit for vLLM.",

"schema": {

"type": "int",

"default": 4096,

"required": true

}

}

]

}

},

{

"variable": "gpus",

"group": "Resources Configuration",

"label": "GPU Configuration",

"schema": {

"type": "dict",

"$ref": [

"definitions/gpu_configuration"

],

"attrs": []

}

}

]

}

}

]

},

"readme": "<h1>vLLM</h1> <p><a href=\"https://github.com/vllm-project/vllm\">vLLM</a> is a high-throughput and memory-efficient inference and serving engine for LLMs with an OpenAI-compatible API.</p>",

"changelog": null,

"chart_metadata": {

"app_version": "v0.20.2",

"capabilities": [],

"categories": [

"ai"

],

"changelog_url": "https://github.com/vllm-project/vllm/releases",

"date_added": "2026-04-30",

"description": "vLLM is a high-throughput and memory-efficient inference and serving engine for LLMs with an OpenAI-compatible API.",

"home": "https://github.com/vllm-project/vllm",

"host_mounts": [],

"icon": "https://media.sys.truenas.net/apps/vllm/icons/icon.svg",

"keywords": [

"ai",

"llm",

"inference",

"openai",

"gpu"

],

"lib_version": "2.3.5",

"lib_version_hash": "c0d042e3de6350fae9aee546d3c79bc0accf1f2011da89cc1ebff29421aa7fc9",

"maintainers": [

{

"email": "dev@truenas.com",

"name": "truenas",

"url": "https://www.truenas.com/"

}

],

"name": "vllm",

"run_as_context": [

{

"description": "Container [vllm] can run as any non-root user and group.",

"gid": 568,

"group_name": "Host group is [apps]",

"uid": 568,

"user_name": "Host user is [apps]"

}

],

"screenshots": [],

"sources": [

"https://apps.truenas.com/catalog/vllm_community/",

"https://github.com/vllm-project/vllm"

],

"title": "vLLM",

"train": "community",

"version": "1.0.3"

}

}

}

Support, maintenance, and documentation for applications within the Community catalog are handled by the TrueNAS community. The TrueNAS Applications Market hosts but does not validate or maintain any linked resources associated with this app.
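To see at a glance which values an installation starts with, the `questions` list in the raw metadata can be walked recursively. This is only an illustrative sketch: `collect_defaults` is a hypothetical helper, not part of TrueNAS, and it assumes the layout visible in the raw file, where `dict` fields nest their children under `attrs` and leaf fields may carry a `default`.

```python
def collect_defaults(questions):
    """Return a nested dict of the default values declared in a questions list."""
    values = {}
    for question in questions:
        schema = question.get("schema", {})
        if schema.get("type") == "dict":
            # Nested fields live under "attrs"; recurse into them.
            values[question["variable"]] = collect_defaults(schema.get("attrs", []))
        elif "default" in schema:
            values[question["variable"]] = schema["default"]
        # Fields without a declared default are left out.
    return values


# Excerpt of the "resources" question from the raw metadata.
sample = [
    {
        "variable": "resources",
        "schema": {
            "type": "dict",
            "attrs": [
                {
                    "variable": "limits",
                    "schema": {
                        "type": "dict",
                        "attrs": [
                            {"variable": "cpus", "schema": {"type": "int", "default": 2}},
                            {"variable": "memory", "schema": {"type": "int", "default": 4096}},
                        ],
                    },
                }
            ],
        },
    }
]

defaults = collect_defaults(sample)
print(defaults)
```

Run against the full `questions` list, the same function would also surface the default web port (30422), the default user and group IDs (568), and the `ix_volume` storage type.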

There currently aren’t any resources available for this application!

Please help the TrueNAS community add resources here or discuss this application in the TrueNAS Community forum.